2017 was a special year for Deep Learning. In addition to the great experimental results obtained thanks to the algorithms developed, the Deep Learning this year has seen its glory in the release of many frameworks. These are very useful tools for developing numerous projects. In the article you will see an overview of many new frameworks that have been proposed as excellent tools for the development of Deep Learning projects.

PyTorch

Among all the frameworks, perhaps the most successful and surprised everyone for its potential is PyTorch, the framework for Deep Learning proposed by Facebook.

PyTorch is a Python package that mainly provides two high-level features:

- Tensor calculus (like Numpy) with GPU acceleration

- Deep Neural Networks built on a tape-based autograd system

In fact, if you choose to use this package, certainly you do it for these two reasons. The first is to use it instead of Numpy, in order to make the most of the computational power of the GPUs. The second is to use a Deep Learning research platform that can provide maximum performance and flexibility.

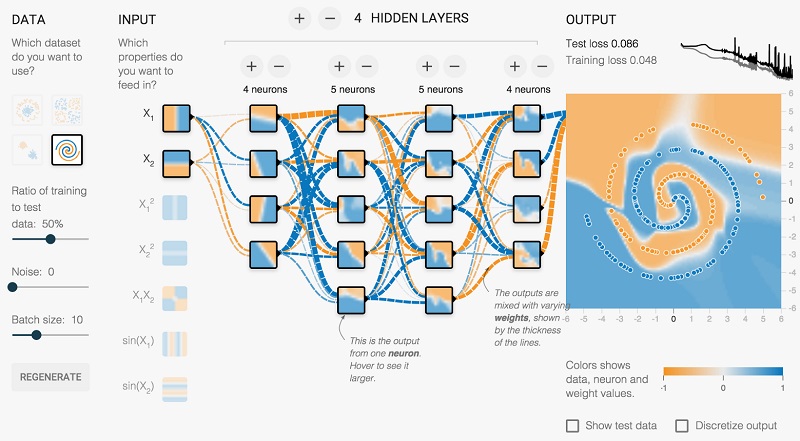

PyTorch is a library that is mainly based on tensors. Certainly if you have already used numpy you will certainly have had to deal with tensors and tensor calculus. But this library will allow you to make the most of both your CPUs and GPUs available, to the best it can, thus having a huge calculation speed.

The dynamic neural networks used are based on a tape-based autograd system. In fact PyTorch has only one way to construct the neural networks, that is to use and re-run a tape recorder. Other very famous frameworks like Theano, TensorFlow have a static view of the world: they build a neural network once and for all and then reuse the same structure over and over again.

With PyTorch, a technique called Reverse-mode auto-differentiation is used, which allows to modify the behavior of the neural network in an arbitrary way.

During the last year, PyTorch has received a lot of attention from many researchers especially in the Natural Language Processing, preferring it to other frameworks for its high dynamism.

Tensorflow

Like Facebook, Google recently introduced TensorFlow, releasing its first Tensorflow 1.0 version in February 2017 with a stable and compatible API. Currently Tensorflow is at version 1.5.

In addition to the main framework, several additional libraries have been released, including Tensorflow Fold for dynamic calculation charts, Tensorflow Transform for input data pipelines, and DeepMind’s higher-level Sonnet library.

And given the great success of PyTorch, the Tensorflow development team recently announced that it will soon be released a new version that will work very similar to PyTorch.

TensorFlow is a library uses graphs (data flow graphs) to perform the mathematical calculations, in these graphs the data flow being gradually processed. Each node (nodes) represents mathematical operations, while the connections (edges) between the nodes represent the multidimensional (tensor) data arrays. From here comes his name TensorFlow.

The flexible architecture of this library allows you to perform calculations using one or more CPUs or GPUs using only one API.

Other frameworks

Like Google and Facebook, many other companies are developing and working on Machine Learning frameworks.

Apple has announced that its reference library for machine learning will be CoreML.

Uber has released Pyro, , a programming language “Deep Probabilistic” together with Michelangelo , which will be its internal platform for Machine Learning

Amazon has instead presented Gluon, a high-level API for machine learning available for MXNet, the Apache Deep Learning framework.

Framework for Reinforcement Learning

But the new frameworks do not end there. In fact, what we have seen so far have a general purpose for Deep Learning, there are also more specialized frameworks, in particular for Reinforcement Learning.

- OpenAI Roboschool is an open-source software for robot simulation

- OpenAI Baselines is a set of Reinforcement Learning algorithms.

- Tensorflow Agents contains an optimized infrastructure to instruct Reinforcement Learning agents using Tensorflow.

- Unity ML Agents allows researchers and developers to create games and simulators using Unity Editor and instructing them using Reinforcement Learning.

- Nervana Coach allows experimentation with the Reinforcement Learning algorithms.

- Facebook’s ELF is the game search platform.

- DeepMind Pycolab is a game engine for a customizable gridworld.

- Geek.ai MAgent is a research platform for many reinforcement learning agents.

Other frameworks

With the aim of making Deep Learning more accessible, there are some very useful frameworks on the web, such as Google’s deeplearn.js and MIL WebDNN.

Theano’s death

2017 was the year of Deep Learning, and it brought us great innovations with the introduction and release of so many frameworks and libraries. But a very popular framework for all is dead: Theano. In fact it has been announced to everyone that 1.0 will be the last released release.

ONNX

But the news certainly does not end here. Given the large number of frameworks present for Deep Learning and Machine Learning in general, Facebook and Microsoft have announced ONNX an open format for sharing deep learning models through frameworks.

In fact ONNX all developers can develop and represent Deep Learning models using the different tools or libraries present today and choose the combination that best suits your needs. It is also possible to develop using a model and then replace it with another later.

Conclusions

As you can see from the article, 2017 was the year of Deep Learning. Every big company (Uber, Facebook, Google, Amazon) has developed and released one or more frameworks that allow to develop and implement Deep Learning. So 2018 promises a year full of development for the use of all these tools made available.

[:]